A challenge in managing HTTP flows (SOAP/HTTP, REST and plain HTTP) is that there can be peaks of activity at certain times, during which HTTP requests are received at a high throughput, while the HTTP protocol is by nature synchronous and offers no way to buffer incoming requests.

This means that the different components managing the flows need to be correctly sized and configured to handle peak volumes, and possibly even to reject incoming HTTP requests received once a given threshold has been exceeded.

Without proper sizing and configuration the following problems are likely to be seen during peak activity times : significantly degraded response times, increased error and time-out rates, out-of-memory errors and even wasted resources in back-end components (when a request is processed but the client times out before the response is sent).

Some readers may think that flow control should be managed with the throttling capability of an API Gateway solution. I agree with this, but not all companies have such a solution in place, especially for internal flows.

Since BusinessWorks 5.X is still used by many TIBCO customers to expose plain HTTP services, Web Services and REST services, and since I recently discovered that few people know the properties available in TIBCO BusinessWorks 5.X to manage HTTP flows, I thought it would be useful to share my experience in that area.

How to better understand what is going on

One important thing before adjusting HTTP configurations in BusinessWorks is to know how to check the load and identify where time is spent in process executions, in other words to identify the activities where most of the time is spent.

These elements are readily available in TIBCO Administrator.

Using the ‘Service Instances -> BW Processes -> Active Processes’ Panel, and using the search button periodically, you can easily find the following elements :

. How many process instances are running at the time you are looking

. Which activities are consuming time

In the example below you can see that there are 31 process instances running, and 10 of them are waiting on the ‘CreateOrder’ activity. You can also see that the ‘BasketPricing’ activity has been active for 9520 milliseconds.

Using the ‘Service Instances -> BW Processes -> Process Definitions’ Panel you can get statistics on process executions (since the corresponding BusinessWorks engine was started):

. How many times a given process was executed

. How many times a given activity of a given process was executed

. How many milliseconds were spent in a given activity, with both elapsed time and CPU time

This is useful to calculate the average execution time of a given activity.

In the example below we can see more details on the OrderCreate process and the CreateOrder activity: the process and this activity were executed 119070 times.

The total time spent in the CreateOrder activity is 1180949156 milliseconds, so the average execution time is 1180949156 / 119070 ≈ 9918 milliseconds, or about 9.9 seconds. We can also see that the total CPU time is 364430 milliseconds, so about 3 milliseconds on average. This shows that most of the time is spent waiting for the SOAP invocation to complete. This waiting time is made up of the time to get a thread to actually invoke the service, the time to reach the back-end, the processing time in the back-end and finally the time for the back-end response to come back to BusinessWorks.
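The arithmetic above can be reproduced with a few lines of Python. This is only a sketch: the helper name `average_ms` is made up for the illustration, and the three figures are the ones quoted from the Process Definitions panel.

```python
def average_ms(total_ms: int, executions: int) -> float:
    """Average time per execution, in milliseconds."""
    if executions <= 0:
        raise ValueError("executions must be positive")
    return total_ms / executions


# Figures quoted in the text for the CreateOrder activity.
executions = 119070
elapsed_total_ms = 1180949156
cpu_total_ms = 364430

avg_elapsed_ms = average_ms(elapsed_total_ms, executions)
avg_cpu_ms = average_ms(cpu_total_ms, executions)
# Fraction of the elapsed time during which the activity was not using CPU.
wait_share = 1 - cpu_total_ms / elapsed_total_ms

print(f"average elapsed time: {avg_elapsed_ms / 1000:.1f} s")
print(f"average CPU time: {avg_cpu_ms:.1f} ms")
print(f"share of elapsed time spent waiting: {wait_share:.2%}")
```

A large gap between elapsed time and CPU time, as here, points to waiting (for a thread or for the back-end) rather than to processing inside BusinessWorks.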

How to adjust the configuration to manage the incoming HTTP requests

This part is well known since the main properties available to configure the elements managing the input flows are exposed in TIBCO Administrator.

However there is a common misunderstanding about one of these properties, ‘Max Job’. This property is related to a mechanism that has been available since the very first release of BusinessWorks to control incoming flows for any transport. The ‘Max Job’ property defines the maximum number of process instances that can be running for a given process starter. When this limit is reached and new events are received, process instances are created, serialized and then written to disk without being executed. Then, when the number of running process instances goes below the limit, a pending process instance is read from disk, de-serialized and instantiated for execution.

This mechanism is effective but, as you can understand from the previous explanation, pretty slow.

So the first recommendation is the following :

R1 : do not use ‘Max Job’ (leave it at the value 0) and the related Activation Limit parameter (leave it unchecked) to control HTTP flows

The other mechanism, ‘Flow Limit’, defines the maximum number of HTTP threads available to handle HTTP requests for a given Process Starter, and therefore the maximum number of process instances that can be started within BusinessWorks for that Process Starter. This mechanism is very efficient and should be used to manage HTTP based flows. When the value ‘0’ is displayed in Administrator the default value 75 is used at runtime.

R2 : use Flow Limit to control HTTP flows

Note that if you quite often see 75 process instances for a given process in the ‘BW Processes -> Active Processes’ panel, this means that the default 75 instances are not enough and the Flow Limit parameter should be set to a higher value (for example 100 or even 150).

This value can be defined in TIBCO Administrator and also in the XML deployment file used by AppManage. The default value 75 can be changed for all HTTP process starters of a given BusinessWorks engine at once with the following property: bw.plugin.http.server.maxProcessors

What is less known is that it is possible to have BusinessWorks reject incoming HTTP requests once Flow Limit is reached or about to be reached. This can be done using the bw.plugin.http.server.acceptCount property.

With the value 1 (and not zero), HTTP requests arriving after Flow Limit is reached will be rejected with an HTTP 500 error. However, it can be a good idea to keep a small number of incoming requests queued and ready to be executed. By default 50 requests are queued.

With this property client applications may receive more HTTP 500 errors, but this avoids having BusinessWorks and the back-ends process requests with long delays, which leads to degraded response times and to situations where requests are processed for client applications that have already timed out. This property applies to all process starters.

R3 : Set acceptCount to a low value to get new HTTP requests rejected with an error 500 once the number of process instances reaches the Flow Limit + acceptCount value for any HTTP based process starter in the BusinessWorks engine. The value 1 is to be used to get new requests rejected when Flow Limit is reached.
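The way Flow Limit and acceptCount combine can be pictured with a small model. The Python sketch below is only an illustration under stated assumptions: the function `admit` and the single `in_flight` counter are hypothetical and do not describe BusinessWorks internals. The defaults 75 and 50 are the ones given in the text, and the threshold is the Flow Limit + acceptCount rule of R3.

```python
def admit(in_flight: int, flow_limit: int = 75, accept_count: int = 50) -> str:
    """Classify a new HTTP request in a simplified admission model.

    in_flight is the number of requests already accepted by the engine,
    whether they are being processed or waiting in the queue (assumption).
    """
    if in_flight < flow_limit:
        return "processed"   # an HTTP thread is available
    if in_flight < flow_limit + accept_count:
        return "queued"      # waits until a thread is freed
    return "rejected"        # the client receives an HTTP 500


# Default settings: requests beyond 75 + 50 are rejected.
print(admit(74), admit(75), admit(125))
# acceptCount lowered to 1: rejection starts almost as soon as Flow Limit is reached.
print(admit(75, accept_count=1), admit(76, accept_count=1))
```

In this model a low acceptCount trades a few more immediate errors for a shorter queue, which is the behavior R3 aims for.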

How to adjust the configuration to manage the outgoing HTTP requests

While BusinessWorks is mostly used as a mediation layer exposing services to WEB Portals or mobile applications based on the orchestration of services exposed by Back-End applications, it is key for the exposed services to have enough resources available to access the back end services when needed.

The above applies to all kinds of back-ends. For example, for database accesses managed with the JDBC Query activity it is important to have enough JDBC connections available, and for the invocation of HTTP based services it is important to have enough HTTP threads.

In this article I am going to focus only on HTTP based back-ends, since BusinessWorks 5.X offers a number of useful configuration options in that area that are not well known because they are only available through properties.

First one important thing to know is that by default each HTTP client activity (HTTP Request Reply, SOAP Request Reply and Invoke REST API) has a dedicated thread pool of 10 threads to invoke HTTP based services and this means that only 10 service calls can be made simultaneously.

So if we see in the ‘Service Instances -> BW Processes -> Active Processes’ panel more than 10 instances of an HTTP client activity being active it means that 10 of them are waiting for a reply from the back end and the other ones are waiting for a thread to become available to be able to send an HTTP request.
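The reading of the Active Processes panel described above can be written down as a tiny helper. This is a sketch only: the function name `split_active_calls` is invented for the illustration, and the default of 10 is the per-activity pool size mentioned in the text.

```python
from typing import Tuple


def split_active_calls(active_instances: int, pool_size: int = 10) -> Tuple[int, int]:
    """Split the instances shown as active on one HTTP client activity.

    Returns (waiting_for_reply, waiting_for_thread).
    """
    waiting_for_reply = min(active_instances, pool_size)
    waiting_for_thread = max(active_instances - pool_size, 0)
    return waiting_for_reply, waiting_for_thread


# 25 instances active on the same activity with the default pool of 10 threads.
replying, blocked = split_active_calls(25)
print(f"{replying} calls in progress, {blocked} instances waiting for a thread")
```

Any non-zero second value is a sign that the thread pool, not the back-end, is the first bottleneck to look at.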

The size of the default dedicated thread pool can be adjusted with the following property, note that this applies to all HTTP client activities : bw.plugin.http.client.ResponseThreadPool

In general it is recommended to set this property to a value that takes into account the maximum number of instances expected for the considered process (defined by Flow Limit, as discussed earlier), the number of HTTP client activities called in your process and the relative execution times of these activities. When you have multiple processes in the same project you have to align on the process that needs the highest value.

For example, in a process with three HTTP client activities taking approximately the same time to execute, we can assume that under a regular load one third of the process instances will be waiting on activity 1, another third on activity 2 and the last third on activity 3. So in that scenario a value of around one third of the Flow Limit value is the optimal value for the ResponseThreadPool property (so a value of 25 or 30 seems appropriate if Flow Limit is set to 75).

In another example, a process has three HTTP client activities, one of which takes approximately twice as long to execute as each of the others. We can assume that under a regular load half of the process instances will be waiting on the activity with the longest response time and the remaining process instances will be equally distributed across the two other activities. So in that scenario a value of around half of the Flow Limit value, to be able to manage the activity taking most of the time, is the optimal value for the ResponseThreadPool property (so a value of 40 seems appropriate if Flow Limit is set to 75).

However, if our processes use many HTTP client activities to call different services, this can lead to a situation where a lot of resources are used to be able to invoke the services when needed. This can be managed by using a shared thread pool that can be enabled with the following property:
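The reasoning of the two examples can be turned into a small calculation. The Python sketch below is an illustration, not a TIBCO formula: the function name `suggested_pool_size` is hypothetical, and it assumes that process instances are distributed across activities in proportion to their execution times.

```python
import math
from typing import Sequence


def suggested_pool_size(flow_limit: int, relative_durations: Sequence[float]) -> int:
    """Lower bound for a dedicated ResponseThreadPool size.

    relative_durations holds one relative execution time per HTTP client
    activity of the process; the slowest activity drives the pool size.
    """
    total = sum(relative_durations)
    if total <= 0:
        raise ValueError("durations must sum to a positive value")
    # Share of instances expected on the slowest activity, applied to Flow Limit.
    return math.ceil(flow_limit * max(relative_durations) / total)


# Three activities with similar execution times, Flow Limit 75.
print(suggested_pool_size(75, [1, 1, 1]))
# One activity twice as slow as the two others, Flow Limit 75.
print(suggested_pool_size(75, [2, 1, 1]))
```

The result is a minimum under a regular load; rounding it up with some headroom gives values in line with the 25 to 30 and 40 suggested above.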

bw.plugin.http.client.ResponseThreadPool.type=single

R4 : If your processes use only a few HTTP client activities you can keep the default dedicated pool approach but it is recommended to increase the value of the following property : bw.plugin.http.client.ResponseThreadPool

The optimal value depends on the number of HTTP client activities you have, the relative response times of these activities and the value of Flow Limit. When you have multiple processes in the same project you have to align on the process needing the highest value.

R5 : If your processes use more than a few HTTP client activities it is recommended to use a shared pool bw.plugin.http.client.ResponseThreadPool.type=single

In that case the ResponseThreadPool is shared between all activities of all running process instances, possibly related to different services. It is then difficult to give a formula to calculate the optimal value for this parameter, as it depends on how many process instances using HTTP client activities will be running simultaneously during peak times.

Some possible starting points are to set ResponseThreadPool to the highest of the different Flow Limit values, or to the sum of all the Flow Limit values divided by 2, or even to an arbitrary value like 100.

The key recommendation here is to run tests with the services that have tight volume or performance requirements, or both.

Using a shared pool also brings the opportunity to use the persistent connection manager, which will increase the throughput if the back-end web server supports the ‘Keep Alive’ mechanism, which is usually the case. This is especially useful when managing high throughputs (more than a few tens of requests per second), and it also reduces the load on the network and firewall infrastructure.
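These three starting points are easy to compute side by side. The sketch below is only an illustration: the function name and the Flow Limit values 75, 100 and 50 are made up for the example, and none of the candidates replaces testing.

```python
import math
from typing import Dict, Sequence


def shared_pool_starting_points(flow_limits: Sequence[int]) -> Dict[str, int]:
    """Candidate ResponseThreadPool values for a shared pool."""
    return {
        "highest_flow_limit": max(flow_limits),
        "half_of_sum": math.ceil(sum(flow_limits) / 2),
        "arbitrary": 100,
    }


# Hypothetical engine exposing three HTTP process starters.
candidates = shared_pool_starting_points([75, 100, 50])
for name, value in candidates.items():
    print(f"{name}: bw.plugin.http.client.ResponseThreadPool={value}")
```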

R6 : When using a shared pool and managing significant volumes it is a good practice to use the persistent connection manager. This can be enabled with the following property for HTTP based configurations: bw.plugin.http.client.usePersistentConnectionManager=true

and for HTTPS configurations : bw.plugin.http.client.usePersistentConnectionManagerForSSL=true

When a shared pool with persistent connections is used, there are two properties available to define the size of the persistent connection pool :

. Number of connections per host with the property : bw.plugin.http.client.maxConnectionsPerHost (default value is 20)

. Total number of connections with the property : bw.plugin.http.client.maxTotalConnections (default value is 200)

If you have multiple services exposed in the same BusinessWorks project it is important to keep in mind that this pool will be shared between them.

Again it is difficult to give a formula to calculate the optimal values for those parameters, as it depends on :

. How many process instances using HTTP client activities will be running simultaneously during peak times (related to the number of services and the related Flow Limit configuration)

. The number of hosts involved in the mediations

. The average duration of service calls to a given host

Good starting points are to set maxConnectionsPerHost to the highest of the different Flow Limit values, or to an arbitrary value like 100, and to set maxTotalConnections to the same value multiplied by the number of hosts involved in the mediations.

Again it is important to run tests with the services that have tight volume or performance requirements, or both.
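The rule of thumb above can be sketched in Python as follows. The function name is hypothetical and the inputs (a highest Flow Limit of 100 and 2 hosts) are example values; only the two property names come from the text.

```python
from typing import Dict, Sequence


def connection_pool_starting_points(flow_limits: Sequence[int], host_count: int) -> Dict[str, int]:
    """Starting values for the persistent connection pool properties."""
    if host_count < 1:
        raise ValueError("host_count must be at least 1")
    per_host = max(flow_limits)
    return {
        "bw.plugin.http.client.maxConnectionsPerHost": per_host,
        "bw.plugin.http.client.maxTotalConnections": per_host * host_count,
    }


# Example: highest Flow Limit of 100 and two back-end hosts.
settings = connection_pool_starting_points([75, 100], host_count=2)
for key, value in settings.items():
    print(f"{key}={value}")
```

These are starting values to be refined by load tests, not computed optima.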

R7 : When using a shared pool with persistent connections the number of persistent connections has to be defined using the two following properties : bw.plugin.http.client.maxConnectionsPerHost and bw.plugin.http.client.maxTotalConnections

R8 : When using a persistent connection manager you can enable the following property to make the configuration more reliable (generally recommended), or disable it to get better performance :

bw.plugin.http.client.checkForStaleConnections

Example of a production configuration using a shared pool and the persistent connection manager (with 2 hosts) :

bw.plugin.http.client.ResponseThreadPool.type=single

bw.plugin.http.client.ResponseThreadPool=100

bw.plugin.http.client.usePersistentConnectionManager=true

bw.plugin.http.client.maxTotalConnections=200

bw.plugin.http.client.maxConnectionsPerHost=100

bw.plugin.http.client.checkForStaleConnections=true

With that configuration we are able to send 100 HTTP requests simultaneously to each host and we are leveraging the Persistent Connection mechanism.
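A quick sanity check of such a configuration can be scripted. The sketch below is an illustration only: `parse_properties` and `connection_budget_ok` are hypothetical helpers, and the check simply verifies that maxTotalConnections covers maxConnectionsPerHost for every host, which is the sizing rule used in this article.

```python
from typing import Dict

# The production example from the text, as it would appear in a properties file.
CONFIG = """\
bw.plugin.http.client.ResponseThreadPool.type=single
bw.plugin.http.client.ResponseThreadPool=100
bw.plugin.http.client.usePersistentConnectionManager=true
bw.plugin.http.client.maxTotalConnections=200
bw.plugin.http.client.maxConnectionsPerHost=100
bw.plugin.http.client.checkForStaleConnections=true
"""


def parse_properties(text: str) -> Dict[str, str]:
    """Parse simple key=value lines, ignoring blank lines and # comments."""
    props: Dict[str, str] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition("=")
        props[key.strip()] = value.strip()
    return props


def connection_budget_ok(props: Dict[str, str], host_count: int) -> bool:
    """True when the total pool can serve the per-host limit on every host."""
    prefix = "bw.plugin.http.client."
    per_host = int(props[prefix + "maxConnectionsPerHost"])
    total = int(props[prefix + "maxTotalConnections"])
    return total >= per_host * host_count


props = parse_properties(CONFIG)
print(connection_budget_ok(props, host_count=2))
```

Running the same check with a third host would flag that maxTotalConnections needs to be raised.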

Other useful elements

# Useful reference elements on HTTP properties

Details about the mentioned HTTP properties are available here in the TIBCO documentation.

# How to make the mentioned properties visible in TIBCO Administrator and AppManage

The mentioned properties can be exposed in TIBCO Administrator and AppManage by using the solution described here in BusinessWorks 5.X documentation.

# Other properties of interest for HTTP flows

To avoid possible problems when an HTTP load balancer is used in front of BusinessWorks, it is a common practice to set the following property to localhost to match the default host configuration element of the HTTP Connection shared resource:

bw.plugin.http.server.defaultHost=localhost

The following properties are useful to manage the lists of IP addresses that are allowed (or not) to call the exposed services (‘white list management’) :

bw.plugin.http.server.allowIPAddresses and bw.plugin.http.server.restrictIPAddresses

# How to ensure stable response times

By default BusinessWorks uses the default Java garbage collector, which is single threaded and blocks processing during garbage collection (for more details search on Google for ‘Java stop the world garbage collection’).

Since recent versions of BusinessWorks 5.X use Java 1.8, it is now possible to use the multi-threaded garbage collector G1GC to limit the impact of garbage collections on response times (available on Linux and Windows).

This can be done with the following :

. Add the following line in bwengine.tra on all BW servers (or edit the value of the property if already present) :

java.extended.properties= -XX:+UseG1GC

. Redeploy all your BW applications

Important things to keep in mind as a conclusion

# BusinessWorks is scalable …

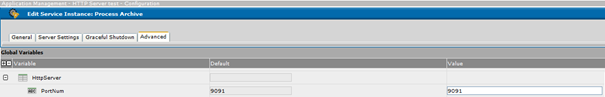

It is common to have two BusinessWorks engines running in a load balanced configuration on two servers, but it is also possible to have four engines running on two servers (with two engines per server). To do this, Global Variables used to define HTTP port values should be defined with the ‘Service’ option like this :

Then at deployment time the port number can be defined in TIBCO Administrator, in the Configuration -> Service Instance panel, in the Advanced tab :

# Testing is very important …

To adjust the property values, run tests to understand the behavior of the configuration, and iterate several times.

# You can isolate a service …

To have full control over a given HTTP service configuration it is sometimes useful to isolate this service on a dedicated BusinessWorks engine.

# Think of the impact for the Back-ends …

Once the BusinessWorks configuration has been adjusted, keep in mind that you are likely to send more requests to the back-ends and that they may have difficulty dealing with the extra load. It could be wise not to increase the number of connections used to invoke the back-ends too quickly. If the back-ends have difficulty handling the load of incoming requests, you might need to limit the flows before they reach BusinessWorks.

# Think architecture …

Keep in mind also that things can be improved by improving the design of the flows. Processing can be parallelized in a BusinessWorks process, and asynchronous messaging can help in processing events that don’t need an immediate answer. It also might not be necessary to log all events related to HTTP services that only perform queries.

I hope the elements provided will help you improve the handling of your HTTP based services, with reduced response times and increased throughput.
