If you manage high-volume JMS message flows, meaning flows with high throughput, large messages, or both, and response time (latency) is a concern, you may already have noticed some strange behaviour in BusinessWorks.

For example, you may have noticed the following:

. On a given queue, short messages, which should be processed faster than large ones, sometimes take as long as large messages, or even a bit longer

. Messages are pending in the input queue, yet not all available BusinessWorks threads are working

. In a load-balanced configuration of BusinessWorks engines, messages are sometimes pending in the input queue while only one engine is busy

This is more visible in use cases where processing a message takes more than a few seconds.

You have already verified that the well-known JMS Receiver best practices are in place (use Client ack and use Max Session to control the number of JMS receiver threads in BusinessWorks), and you still can’t explain why JMS message load balancing is not optimal.

The EMS prefetch queue and topic property

The explanation is not in the BusinessWorks layer but in the EMS layer. In fact this is because of the ‘prefetch’ property of EMS destinations (queues and topics).

When the prefetch property is set, messages are delivered to consumer threads in batches, one batch per thread, so in practice each thread has:

. one message being processed

. ‘prefetch value - 1’ messages already delivered to the consumer application, waiting to be processed and not available to other threads (until the previous message is confirmed or the thread is closed)

Doing a test to check the prefetch behaviour

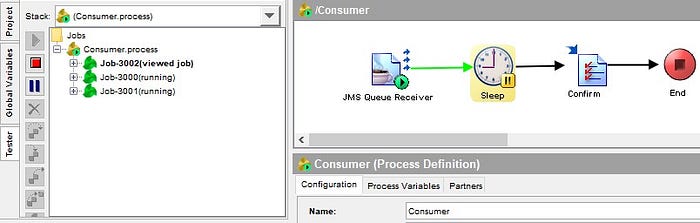

To see this behaviour, you can create the following BusinessWorks application:

. A simple JMS Consumer process using the best practices mentioned above (configured for Client Ack and MaxSession = 10)

. The process simply sleeps for 10 minutes and then confirms the JMS message (to simulate a long-running process execution)

. The input queue will use the default EMS queue configuration

Before starting the application, put 12 messages in the input queue with GEMS and think about what you expect to happen. I bet you expect 10 threads to start executing and 2 messages to remain pending in the queue, waiting for a thread to finish.

In reality, you will see only three threads executing and 9 messages pending.

Why? Because by default EMS queues are configured with ‘prefetch = 5’.

So basically what is happening at application start-up is the following:

. One thread gets 5 messages, one is processed, the 4 others are waiting to be processed

. Another thread gets 5 messages, one is processed, the 4 others are waiting to be processed

. Another thread gets the 2 remaining messages
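The start-up sequence above can be reproduced with a few lines of Python. This is only a sketch of the arithmetic, not of how the EMS server is implemented: the function name `distribute` and the assumption that batches are handed out to sessions one after another until the queue is empty are illustrative simplifications.

```python
def distribute(messages: int, sessions: int, prefetch: int) -> tuple[list[int], int]:
    """Return (messages held per session, messages left on the server).

    Simplified model (an assumption): each session in turn receives a batch
    of up to `prefetch` messages until the queue is empty.
    """
    held = []
    remaining = messages
    for _ in range(sessions):
        batch = min(prefetch, remaining)
        held.append(batch)
        remaining -= batch
    return held, remaining


held, left_on_server = distribute(messages=12, sessions=10, prefetch=5)
busy_threads = sum(1 for h in held if h > 0)
# Every busy thread processes one message; the rest of its batch waits.
pending = sum(h - 1 for h in held if h > 0) + left_on_server

print(held)                   # [5, 5, 2, 0, 0, 0, 0, 0, 0, 0]
print(busy_threads, pending)  # 3 9
```

Calling `distribute(12, 10, 1)` in the same model gives 10 busy sessions and 2 messages left on the server, which is the distribution most people expect.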

How to ensure smooth load balancing of JMS messages

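To avoid the behavior described above, you just have to disable the ‘prefetch’ mechanism by setting this property to ‘none’. Setting it to ‘1’ has a similar effect, because a consumer then fetches a new message only when it holds none. Note that ‘0’ does not disable prefetch: it makes the destination inherit the value from its parent destination, or use the default if no parent sets one.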

This can be done in ‘tibemsadmin’ with the following command:

addprop queue <Queue.Name> prefetch=none

After updating our test input queue configuration with prefetch=none, we now see the expected behavior: 10 threads executing and 2 messages pending in the queue.
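To put rough numbers on the difference, the sketch below estimates when the last of the 12 test messages is confirmed in both configurations. It is a simplified model with assumptions of its own: the function name `last_completion` is hypothetical, a session is assumed to fetch a new batch only once it has worked through its previous one, and ‘prefetch=none’ is modelled as a batch size of 1.

```python
import heapq


def last_completion(messages: int, sessions: int, batch: int,
                    minutes_per_message: int) -> int:
    """Minutes until the last message is confirmed (simplified model)."""
    on_server = messages
    # Heap of (time at which the session has no local messages left, session id).
    free_at = [(0, s) for s in range(sessions)]
    heapq.heapify(free_at)
    finished = 0
    while on_server > 0:
        now, session = heapq.heappop(free_at)
        taken = min(batch, on_server)
        on_server -= taken
        # The session processes its batch one message at a time.
        done = now + taken * minutes_per_message
        finished = max(finished, done)
        heapq.heappush(free_at, (done, session))
    return finished


print(last_completion(12, 10, batch=5, minutes_per_message=10))  # 50
print(last_completion(12, 10, batch=1, minutes_per_message=10))  # 20
```

In this model the default prefetch stretches the test from 20 to 50 minutes, because two sessions each work through five messages sequentially while seven sessions stay idle.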

Possible impacts of disabling the prefetch mechanism

With the prefetch mechanism disabled, messages are delivered one by one to consumer applications. This creates a little overhead in the EMS server, and there is also a network overhead for each message (instead of for each message batch). The effect is limited if the BusinessWorks servers are in the same datacenter as the EMS servers, but it could still impact the EMS server in high-volume environments. This is why it is recommended to adjust the ‘prefetch’ parameter only for queues whose messages have a significant processing time in BusinessWorks (say, more than a few tens of seconds). If the BusinessWorks servers are in a remote datacenter connected to the EMS servers through a relatively high-latency WAN, you might need to run some tests to check the impact.

Another impact is that the load on the BusinessWorks servers may increase, since all threads will now be working. In a production environment it is therefore wise not to disable the prefetch property for all queues at the same time.
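As a back-of-the-envelope illustration of the network overhead, the sketch below counts one client/server exchange per delivered batch and multiplies by a round-trip time. The function names, the message volume, and the 1 ms and 50 ms round-trip times are made-up assumptions, and the real protocol cost depends on the EMS version and acknowledgement mode, so treat the figures as orders of magnitude and measure in your own environment.

```python
import math


def fetch_round_trips(messages: int, batch: int) -> int:
    """Number of deliveries, assuming one exchange per batch (an assumption)."""
    return math.ceil(messages / batch)


def cumulative_wait_seconds(messages: int, batch: int, round_trip_ms: float) -> float:
    """Total time spent in delivery round trips, summed over all consumers."""
    return fetch_round_trips(messages, batch) * round_trip_ms / 1000


volume = 100_000  # hypothetical number of messages
for label, rtt_ms in (("same datacenter", 1), ("WAN", 50)):
    batched = cumulative_wait_seconds(volume, batch=5, round_trip_ms=rtt_ms)
    one_by_one = cumulative_wait_seconds(volume, batch=1, round_trip_ms=rtt_ms)
    print(f"{label}: prefetch=5 -> {batched:.0f} s, no prefetch -> {one_by_one:.0f} s")
```

The totals are cumulative across all consumers rather than wall-clock time, but they show why the per-message cost matters far more over a high-latency link than inside one datacenter.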

Summary

If you manage JMS message flows that have a significant processing time in BusinessWorks (say, more than a few tens of seconds) and you need to ensure that no latency is introduced in the BusinessWorks layer, it is a best practice to disable the ‘prefetch’ property for the corresponding JMS queues and topics.

This applies to both BusinessWorks 5.X and BusinessWorks 6.X, and to any TIBCO EMS consumer application.

Reference

EMS documentation on the prefetch property:

https://docs.tibco.com/pub/ems/10.2.1/doc/html/Default.htm#users-guide/prefetch.htm
